These notebooks have been created by Jonathan Chung, as part of his internship as Applied Scientist @ Amazon AI, in collaboration with Thomas Delteil who built the original prototype.

git clone https://github.com/awslabs/handwritten-text-recognition-for-apache-mxnet --recursive

You need to install SCLITE for WER evaluation. You can run the following bash script from this folder:

cd ..

git clone https://github.com/usnistgov/SCTK

cd SCTK

export CXXFLAGS="-std=c++11" && make config

make all

make check

make install

make doc

cd -

You also need hnswlib:

pip install pybind11 numpy setuptools

cd ..

git clone https://github.com/nmslib/hnswlib

cd hnswlib/python_bindings

python setup.py install

cd ../..

If "AssertionError: Please enter credentials for the IAM dataset in credentials.json or as arguments" occurs, rename credentials.json.example to credentials.json and fill in your username and password.

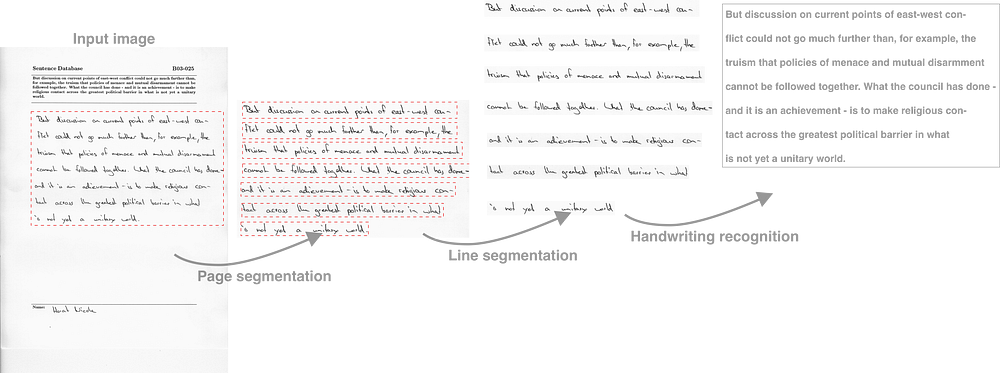

The pipeline is composed of 3 steps:

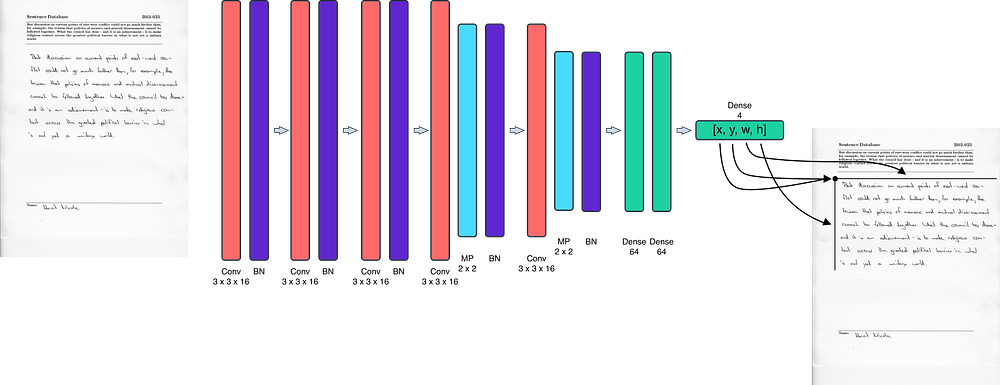

- Detecting the handwritten area in a form [blog post], [jupyter notebook], [python script]

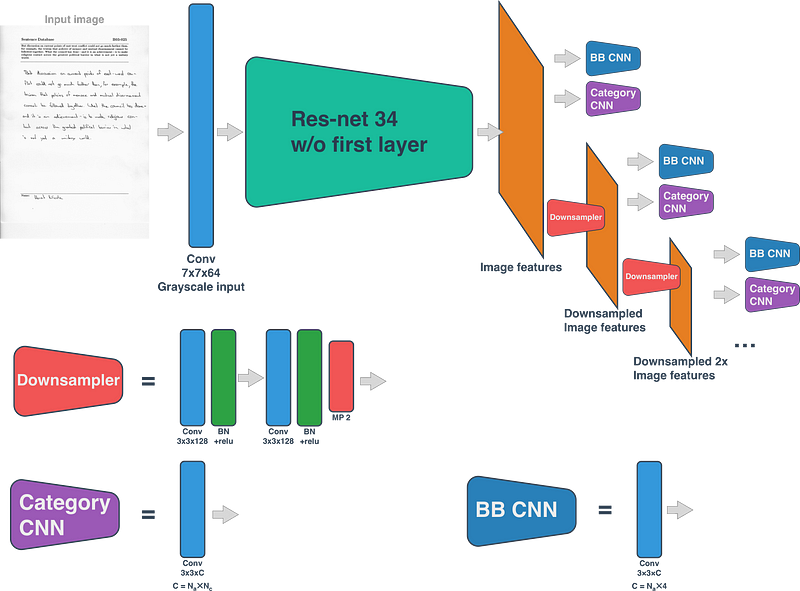

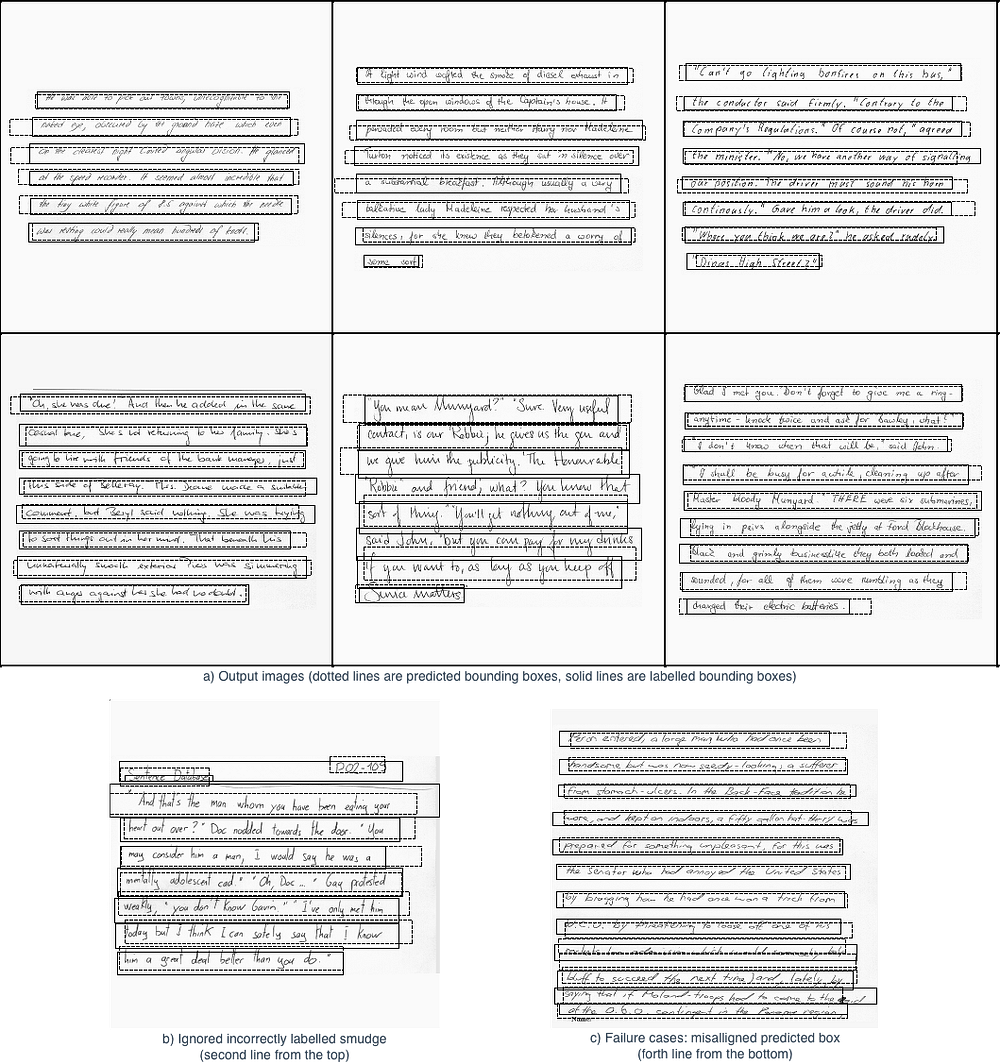

- Detecting lines of handwritten text [blog post], [jupyter notebook], [python script]

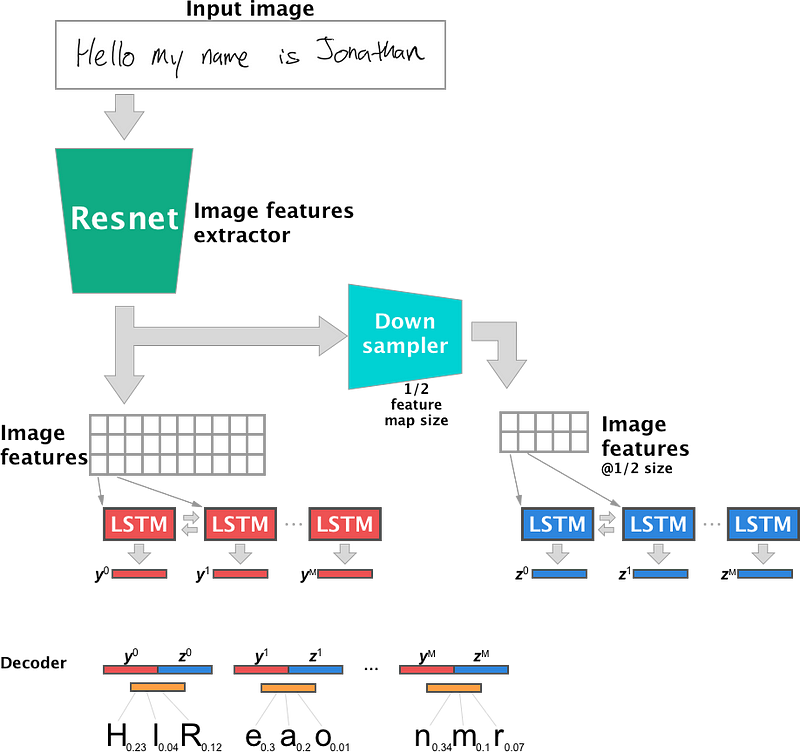

- Recognising characters and applying a language model to correct errors. [blog post], [jupyter notebook], [python script]

The entire inference pipeline can be found in this notebook. See the pretrained models section for the pretrained models.

A recorded talk detailing the approach is available on YouTube. [video]

The corresponding slides are available on SlideShare. [slides]

You can get the models by running python get_models.py:

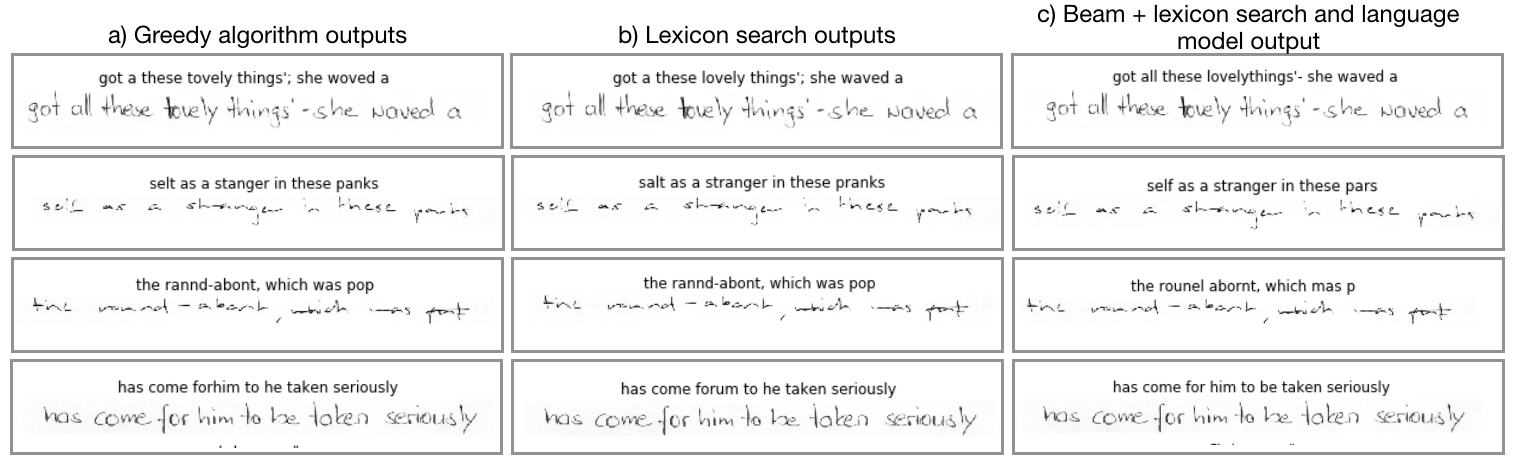

The greedy, lexicon search, and beam search outputs give similar and reasonable predictions for the selected examples. Figure 6 presents some interesting examples. The first line of Figure 6 shows cases where the lexicon search algorithm corrected the words. In the top example, “tovely” (as it was written) was corrected to “lovely” and “woved” was corrected to “waved”. In addition, the beam search output corrected “a” into “all”, but it missed the space between “lovely” and “things”. In the second example, the lexicon search output converted “selt” to “salt”, whereas the beam search output erroneously converted it to “self”, so beam search performed worse in this example. In the third example, none of the three methods produced comprehensible results. Finally, in the fourth example, the lexicon search algorithm incorrectly converted “forhim” into “forum”, while the beam search algorithm correctly identified “for him”.
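To make the lexicon search behaviour above more concrete, here is a minimal sketch of word correction by nearest edit distance. It is an illustration only and not the implementation used in the notebooks: the `edit_distance` and `closest_words` helpers and the toy lexicon are assumptions made for this example.

```python
from __future__ import annotations


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum inserts, deletes and substitutions."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute or match
            ))
        previous = current
    return previous[-1]


def closest_words(word: str, lexicon: list[str]) -> list[str]:
    """Return every lexicon entry tied for the smallest distance, sorted."""
    if word in lexicon:
        return [word]
    distances = {entry: edit_distance(word, entry) for entry in lexicon}
    best = min(distances.values())
    return sorted(entry for entry, d in distances.items() if d == best)


# Hypothetical toy lexicon; a real one would hold many thousands of words.
lexicon = ["lovely", "waved", "salt", "self", "forum", "things"]

for recognised in ["tovely", "woved", "selt", "forhim"]:
    print(recognised, "->", closest_words(recognised, lexicon))
```

With this toy lexicon, “selt” is one edit away from both “salt” and “self”, so edit distance alone cannot choose between them. Likewise “forhim” maps to the single word “forum”, because a word-level lexicon cannot insert the missing space.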


To use test_iam_dataset.ipynb, create credentials.json by copying credentials.json.example and editing the appropriate fields. The username and password can be obtained from http://www.fki.inf.unibe.ch/DBs/iamDB/iLogin/index.php.
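The credentials step can also be scripted. The sketch below is a hypothetical helper, not part of the repository, and it assumes the file is JSON with `username` and `password` keys. Those key names are inferred from the text above and should be checked against credentials.json.example.

```python
import json
from pathlib import Path


def write_credentials(example_path, output_path, username, password):
    """Copy the example file's structure and fill in the login fields."""
    example = Path(example_path)
    # Start from the example so any extra keys it contains are preserved.
    data = json.loads(example.read_text()) if example.exists() else {}
    # Assumption: the login fields are named "username" and "password".
    data["username"] = username
    data["password"] = password
    Path(output_path).write_text(json.dumps(data, indent=4) + "\n")
    return data
```

It could be called as `write_credentials("credentials.json.example", "credentials.json", "my_user", "my_password")`, with the placeholder values replaced by your IAM database login.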


An instance with at least 32GB of RAM and 100GB of disk is recommended, along with a GPU. On AWS, a p3.2xlarge is the recommended starter instance for this project.
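As a rough preflight check against these recommendations, the sketch below compares a machine's resources with the 32GB RAM and 100GB disk figures. The `check_resources` helper is hypothetical and takes the measured values as arguments. The disk size is read with the standard library; RAM and GPU count depend on the platform and framework, so the values passed here are placeholders.

```python
import shutil


def check_resources(ram_gb, disk_gb, gpu_count, min_ram_gb=32, min_disk_gb=100):
    """Return a list of warnings for resources below the recommendation."""
    warnings = []
    if ram_gb < min_ram_gb:
        warnings.append(f"RAM is {ram_gb:.0f}GB, below the recommended {min_ram_gb}GB")
    if disk_gb < min_disk_gb:
        warnings.append(f"Disk is {disk_gb:.0f}GB, below the recommended {min_disk_gb}GB")
    if gpu_count < 1:
        warnings.append("No GPU detected; a GPU is recommended")
    return warnings


# Total size of the disk holding the current directory, in GB.
disk_gb = shutil.disk_usage(".").total / 1e9

# Placeholder values: replace with the RAM and GPU count of your instance.
for warning in check_resources(ram_gb=61, disk_gb=disk_gb, gpu_count=1):
    print(warning)
```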